A Claude Code agent can do real work now. It can read a repo, edit files, run tests, recover from errors, and hand you a pull request that looks like it came from a tired but competent engineer.

That is exciting. It is also exactly where teams start making bad decisions.

The question is not “can the agent change the code?” We already know it can. The better question is: if the change breaks something three days later, can you reconstruct what happened without guessing?

If the answer is no, you do not have a production workflow yet. You have a demo with commit access.

The chat transcript is not enough

A chat transcript feels useful while the work is happening. It shows the prompt, the reasoning-ish conversation, and some intermediate steps.

But it is a weak operational record.

It usually does not tell you, in one clean place, which files were touched, which commands ran, which checks failed, which warnings were ignored, which constraints were given, which human approved the risky bit, or how to roll the change back.

That matters because production incidents do not happen in the same mood as demos. Nobody wants to scroll through a long agent conversation at 02:00 while trying to work out why a payment path changed.

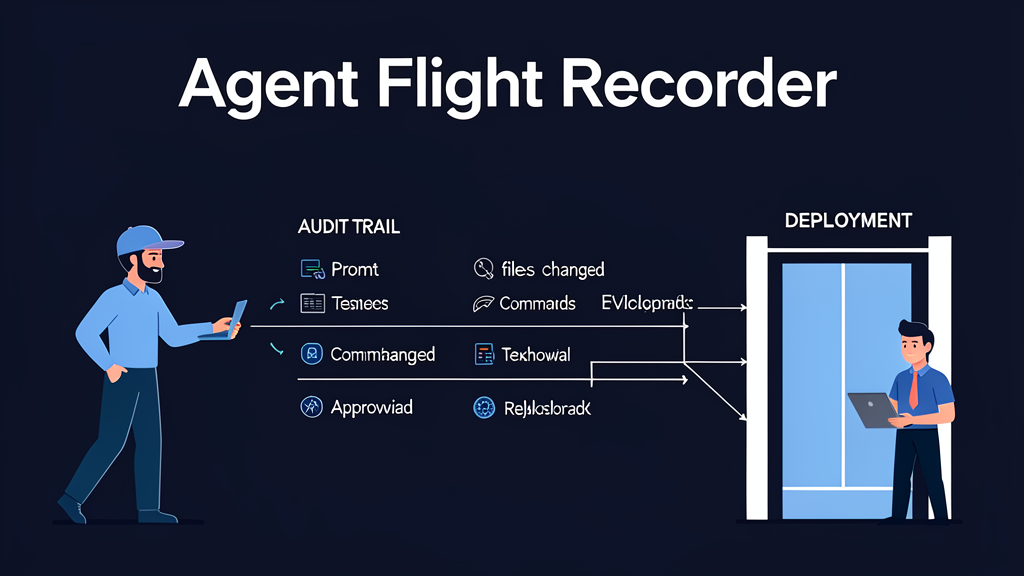

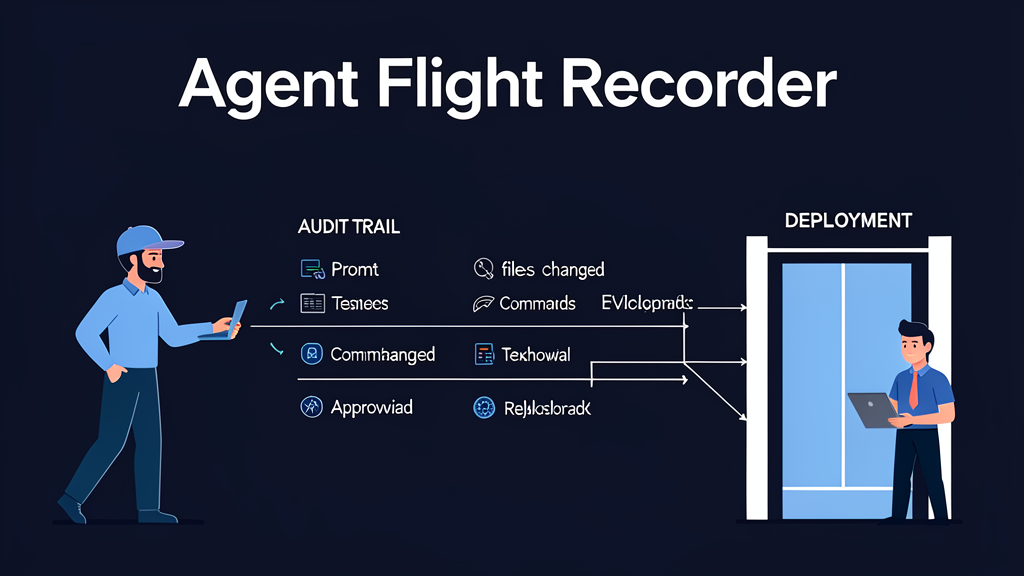

Teams need something closer to a flight recorder.

What an agent flight recorder should capture

For Claude Code work, I would want every serious task to leave behind a compact artifact trail. Not a novel. Not a raw dump of everything the model said. A record that another engineer can inspect quickly.

At minimum:

- the original task intent

- the boundaries the agent was given

- the repo, branch, and starting commit

- files read and files changed

- commands executed

- tests and checks run

- failures, retries, and skipped checks

- dependency or configuration changes

- human approvals

- the final diff or pull request

- rollback notes

That list sounds boring. Good. Boring is what you want when an automated actor is changing a codebase.

The artifact trail gives reviewers something concrete to evaluate. It also gives the next agent run better context. Without it, every run starts with a foggy memory of what happened last time.
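The minimum list above can be written down as a typed record. That makes a missing field visible before anyone starts reviewing. What follows is a minimal TypeScript sketch. The FlightRecord and CheckRun types, their field names, and the missingEvidence helper are hypothetical. They are not part of Claude Code or any library.

```typescript
// Hypothetical record shape for one agent run. Field names are illustrative,
// not part of any Claude Code API.
type CheckStatus = "passed" | "failed" | "skipped";

interface CheckRun {
  command: string;     // e.g. "npm run typecheck"
  status: CheckStatus;
  note: string;        // failure message or reason for skipping; "" if none
}

interface FlightRecord {
  taskIntent: string;
  boundaries: string[];
  repo: string;
  branch: string;
  startingCommit: string;
  filesRead: string[];
  filesChanged: string[];
  commandsRun: string[];
  checks: CheckRun[];                  // every run in order, including failures, retries and skips
  dependencyOrConfigChanges: string[]; // empty array means "none"
  humanApprovals: string[];            // who approved what, e.g. "Thomas: PR creation"
  finalDiffOrPr: string;
  rollbackNotes: string;
}

// Returns the mandatory fields left blank, so an incomplete record is caught
// before review. In this sketch, filesRead, dependencyOrConfigChanges and
// humanApprovals may legitimately be empty and are not checked.
function missingEvidence(r: FlightRecord): string[] {
  const missing: string[] = [];
  const textFields: [string, string][] = [
    ["taskIntent", r.taskIntent],
    ["repo", r.repo],
    ["branch", r.branch],
    ["startingCommit", r.startingCommit],
    ["finalDiffOrPr", r.finalDiffOrPr],
    ["rollbackNotes", r.rollbackNotes],
  ];
  for (const [name, value] of textFields) {
    if (value.trim() === "") missing.push(name);
  }
  const listFields: [string, unknown[]][] = [
    ["boundaries", r.boundaries],
    ["filesChanged", r.filesChanged],
    ["commandsRun", r.commandsRun],
    ["checks", r.checks],
  ];
  for (const [name, value] of listFields) {
    if (value.length === 0) missing.push(name);
  }
  return missing;
}
```

Deciding which fields count as mandatory is a policy choice. This sketch allows approvals and dependency changes to be empty. A team handling riskier work would probably tighten that.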

The dangerous gap: “the tests passed”

“The tests passed” is not an artifact trail.

Which tests? On which branch? Before or after the agent changed dependencies? Were integration tests skipped because Docker was unavailable? Did the agent rerun the failing test after its fix, or only run a narrow unit test that happened to pass?

This is where agentic coding gets slippery. The agent can produce a confident summary, and that summary may be mostly true. Mostly true is not enough when you are deciding whether to merge.

A production workflow should record the evidence, not just the conclusion.

For example:

Task: add server-side validation to signup endpoint

Boundary: no schema changes, no auth flow changes

Starting commit: 9f41c2a

Files changed: api/signup.ts, api/signup.test.ts

Commands run:

npm test -- signup

npm run typecheck

Failed checks:

npm test -- signup failed once: duplicate email case expected 409

Retry:

fixed response mapping, reran signup tests

Final checks:

npm test -- signup passed

npm run typecheck passed

Human approval:

approved by Thomas for PR creation, deployment not approved

Rollback:

revert PR #184, or restore api/signup.ts and api/signup.test.ts from 9f41c2a

That is not fancy. It is useful.
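A record like this has enough structure to check the claim “the tests passed” instead of taking it on trust. The following is a minimal sketch. It assumes each check run is logged with a hypothetical editCount field, meaning how many edits the agent had applied when the check ran. verifyChecks and the other names are illustrative. The ordering of the signup runs is one plausible reading of the record above.

```typescript
// Minimal sketch: keep each check run as evidence and derive "the tests
// passed" from it. editCount is a hypothetical field: how many edits the
// agent had applied when this check ran.
type Status = "passed" | "failed" | "skipped";

interface CheckRun {
  command: string;
  status: Status;
  editCount: number;
}

interface Verdict {
  supported: boolean;
  problems: string[];
}

// The claim holds only if every required command has a run recorded after
// the final edit, and the latest such run passed.
function verifyChecks(required: string[], runs: CheckRun[], totalEdits: number): Verdict {
  const problems: string[] = [];
  for (const cmd of required) {
    const finalRuns = runs.filter(r => r.command === cmd && r.editCount === totalEdits);
    if (finalRuns.length === 0) {
      problems.push(`${cmd}: no run recorded after the final edit`);
      continue;
    }
    const latest = finalRuns[finalRuns.length - 1];
    if (latest.status !== "passed") {
      problems.push(`${cmd}: latest run after the final edit was ${latest.status}`);
    }
  }
  return { supported: problems.length === 0, problems };
}

// The signup example in one plausible order: the first test run failed,
// the agent made a second edit (the response-mapping fix), then both checks passed.
const signupRuns: CheckRun[] = [
  { command: "npm test -- signup", status: "failed", editCount: 1 },
  { command: "npm test -- signup", status: "passed", editCount: 2 },
  { command: "npm run typecheck", status: "passed", editCount: 2 },
];

const verdict = verifyChecks(["npm test -- signup", "npm run typecheck"], signupRuns, 2);
console.log(verdict.supported, verdict.problems);
```

Suppose a team also required a hypothetical npm run test:integration suite. The same function would then report the claim as unsupported. It would do the same if the agent made a third edit after those runs, because checks that ran before the last edit no longer count.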

Observability before autonomy

A lot of Claude Code discussions jump straight to autonomy: how much can the agent do without asking?

I think the better order is observability first, autonomy second.

If you cannot see what the agent is doing, you should not expand what it is allowed to do. More autonomy without a better record just makes the blast radius harder to understand.

Start with read-only exploration. Then allow narrow edits. Then allow test execution. Then allow pull request creation. Treat deployment, infrastructure changes, secrets, billing, migrations, and production data as separate permission levels.
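The ladder above can be sketched as ordered levels plus separate grants for sensitive areas. This is an illustrative TypeScript model, not Claude Code's permission configuration. The Level enum, the area names, and isAllowed are hypothetical. One design choice is worth noting: reaching the top of the ladder never implies access to migrations, secrets, or production data.

```typescript
// Hypothetical permission ladder for an agent run. Level and area names are
// illustrative, not Claude Code settings.
enum Level {
  ReadOnly = 0,
  NarrowEdits = 1,
  RunTests = 2,
  CreatePullRequest = 3,
}

// High-risk areas are not higher rungs: each needs its own explicit grant,
// however high the ladder level is.
type SensitiveArea =
  | "deployment"
  | "infrastructure"
  | "secrets"
  | "billing"
  | "migrations"
  | "productionData";

interface Grant {
  level: Level;
  sensitive: SensitiveArea[];
}

type Action =
  | { kind: "read" }
  | { kind: "edit" }
  | { kind: "test" }
  | { kind: "openPr" }
  | { kind: "sensitive"; area: SensitiveArea };

// Minimum ladder level for each ordinary action.
const minimumLevel: Record<"read" | "edit" | "test" | "openPr", Level> = {
  read: Level.ReadOnly,
  edit: Level.NarrowEdits,
  test: Level.RunTests,
  openPr: Level.CreatePullRequest,
};

function isAllowed(grant: Grant, action: Action): boolean {
  if (action.kind === "sensitive") {
    // Only an explicit grant for this area counts; the ladder level is ignored.
    return grant.sensitive.indexOf(action.area) !== -1;
  }
  return grant.level >= minimumLevel[action.kind];
}
```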

The flight recorder should change as the permission level changes. A small docs edit does not need the same record as a migration. A dependency upgrade deserves more evidence than a typo fix.
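One way to make the record scale with risk is a table of required evidence for each kind of change. The change kinds, the evidence names, and the mapping itself are assumptions for illustration. They are not a standard policy.

```typescript
// Hypothetical change kinds and evidence types; the mapping is an example
// policy, not a standard.
type ChangeKind = "docs" | "typoFix" | "code" | "dependencyUpgrade" | "migration";

type Evidence =
  | "diff"
  | "filesChanged"
  | "checksRun"
  | "lockfileDiff"
  | "dryRunOutput"
  | "humanApproval"
  | "rollbackPlan";

const baseline: Evidence[] = ["diff", "filesChanged"];

// Riskier change kinds add evidence on top of the baseline.
const requiredEvidence: Record<ChangeKind, Evidence[]> = {
  docs: baseline,
  typoFix: baseline,
  code: [...baseline, "checksRun", "rollbackPlan"],
  dependencyUpgrade: [...baseline, "checksRun", "lockfileDiff", "humanApproval", "rollbackPlan"],
  migration: [...baseline, "checksRun", "dryRunOutput", "humanApproval", "rollbackPlan"],
};

// Lists the evidence a run still owes before its record is acceptable.
function outstandingEvidence(kind: ChangeKind, provided: Evidence[]): Evidence[] {
  return requiredEvidence[kind].filter(e => provided.indexOf(e) === -1);
}
```

Consider a dependency upgrade whose record only has the diff, the changed files, and the checks. Under this example policy, outstandingEvidence would report that the lockfile diff, a human approval, and a rollback plan are still owed. The same partial record would be complete for a docs edit.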

Human review gets better when the record is good

The point of an artifact trail is not to bury humans in paperwork. It is to make review less theatrical.

Without a record, the reviewer is stuck reading the diff and trusting the agent’s summary. With a record, the reviewer can ask sharper questions:

- Did the agent stay inside the requested boundary?

- Did it touch files it did not mention?

- Did it run the checks that matter for this code path?

- Did it silently accept a weaker design after a failure?

- Is rollback obvious enough for the risk level?

This is where human judgment still matters. The agent can do the mechanical work. The human has to decide whether the trade-off is acceptable.

Good records make that decision faster and less vibes-based.
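The first three reviewer questions are mechanical enough to pre-compute from the artifact trail. That leaves the human free for the judgment calls. This minimal sketch assumes the boundary can be expressed as a list of allowed path prefixes. ReviewInput and reviewFlags are hypothetical names.

```typescript
// Hypothetical reviewer inputs. The boundary is modelled as allowed path
// prefixes; real boundaries may need richer rules than this.
interface ReviewInput {
  allowedPrefixes: string[]; // e.g. ["api/signup"]
  reportedFiles: string[];   // files the agent's summary says it changed
  diffFiles: string[];       // files actually present in the diff
  requiredChecks: string[];  // checks that matter for this code path
  checksRun: string[];       // checks recorded in the artifact trail
}

// Pre-computes the mechanical review questions. Whether a weaker design was
// accepted, or whether rollback is adequate, is left to the human reviewer.
function reviewFlags(input: ReviewInput): string[] {
  const flags: string[] = [];
  for (const file of input.diffFiles) {
    const inside = input.allowedPrefixes.some(p => file.indexOf(p) === 0);
    if (!inside) flags.push(`outside boundary: ${file}`);
    if (input.reportedFiles.indexOf(file) === -1) {
      flags.push(`changed but not reported: ${file}`);
    }
  }
  for (const check of input.requiredChecks) {
    if (input.checksRun.indexOf(check) === -1) {
      flags.push(`required check not run: ${check}`);
    }
  }
  return flags;
}
```

Take the signup task and suppose the diff unexpectedly included a hypothetical db/schema.sql, while npm run typecheck was missing from the recorded checks. This would produce three flags. The file is outside the boundary, it was not reported, and a required check did not run.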

The artifact trail becomes training data for the workflow

There is another benefit: the artifact trail shows where the process is bad.

If agents keep skipping the same integration test, fix the harness or make that check mandatory. If they keep touching too many files, tighten the task boundary. If reviewers keep asking the same question, add that field to the record. If cost spikes on broad exploration tasks, split discovery from implementation.

This is the part of production agent work that interests me most. You are not just prompting a model. You are designing a system that gets better, run by run, at using the model responsibly.

The transcript is a conversation. The flight recorder is an operating asset.
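Spotting those patterns takes records from many runs, not one. The following is a minimal sketch of two of the signals described in this section. It assumes each run is summarized by its skipped checks and a count of changed files. RunSummary, recurringSkips, oversizedRuns, and the thresholds are hypothetical.

```typescript
// Hypothetical per-run summary pulled from each artifact trail.
interface RunSummary {
  skippedChecks: string[];
  filesChanged: number;
}

// Counts how many runs skipped each check and returns those skipped in at
// least minRuns runs: candidates for a harness fix or a mandatory check.
function recurringSkips(runs: RunSummary[], minRuns: number): string[] {
  // Prototype-free objects, so check names cannot collide with built-in keys.
  const counts: { [check: string]: number } = Object.create(null);
  for (const run of runs) {
    const seen: { [check: string]: boolean } = Object.create(null);
    for (const check of run.skippedChecks) {
      if (seen[check]) continue; // count each check once per run
      seen[check] = true;
      counts[check] = (counts[check] || 0) + 1;
    }
  }
  return Object.keys(counts).filter(c => counts[c] >= minRuns).sort();
}

// Indexes of runs that touched more files than the limit, a hint that the
// task boundary is too loose.
function oversizedRuns(runs: RunSummary[], maxFiles: number): number[] {
  const indexes: number[] = [];
  runs.forEach((run, i) => {
    if (run.filesChanged > maxFiles) indexes.push(i);
  });
  return indexes;
}
```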

A simple rule for Claude Code teams

Before giving a Claude Code agent more permission, ask this:

If this run causes a bug, what record will help us understand it quickly?

If the answer is “the chat probably has it somewhere”, slow down.

Build the artifact trail first. Then increase autonomy.

That one habit changes the conversation from “look what the agent can do” to “look what our engineering system can safely absorb”. That is the difference between a clever demo and a production workflow.
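The rule can become an explicit gate in whatever process grants the agent its next permission level. This minimal sketch assumes each recent run has already been audited for record completeness and open review flags. RunAudit, mayIncreaseAutonomy, and the minimum run count are hypothetical.

```typescript
// Hypothetical per-run audit result.
interface RunAudit {
  recordComplete: boolean; // no mandatory field left blank
  openReviewFlags: number; // reviewer flags still unresolved
}

// "Build the artifact trail first. Then increase autonomy." as a gate:
// require at least minRuns recent runs, all with complete records and
// no open flags, before granting the next permission level.
function mayIncreaseAutonomy(recentRuns: RunAudit[], minRuns: number): boolean {
  if (recentRuns.length < minRuns) return false;
  return recentRuns.every(run => run.recordComplete && run.openReviewFlags === 0);
}
```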

I am writing more about this in Claude Code: Building Production Agents That Actually Scale. The book is about the engineering layer around Claude Code: permissions, tool governance, evaluation, observability, cost control, and human review.

Related: Claude Code Is Not the Product: The Production Loop Is.